Lei Xie is a Professor at Northwestern Polytechnical University, where he leads the Audio, Speech and Language Processing Lab (ASLP@NPU). His research focuses on speech processing, conversational AI, and neural models for speech and language technologies, with work spanning speech enhancement, automatic speech recognition, and speech synthesis.

He is also committed to building open-source tools and data resources for the research community, including the widely used WeNet toolkit and the WenetSpeech open-data series.

Professor Xie has published over 400 papers, with more than 18,000 Google Scholar citations and an H-index of 63. His work has received multiple best paper awards, won international challenge championships, and been translated into industrial applications. He currently serves as Vice Chairperson of ISCA SIG-CSLP and Senior Area Editor for IEEE/ACM TASLP and IEEE SPL.

Full Biography

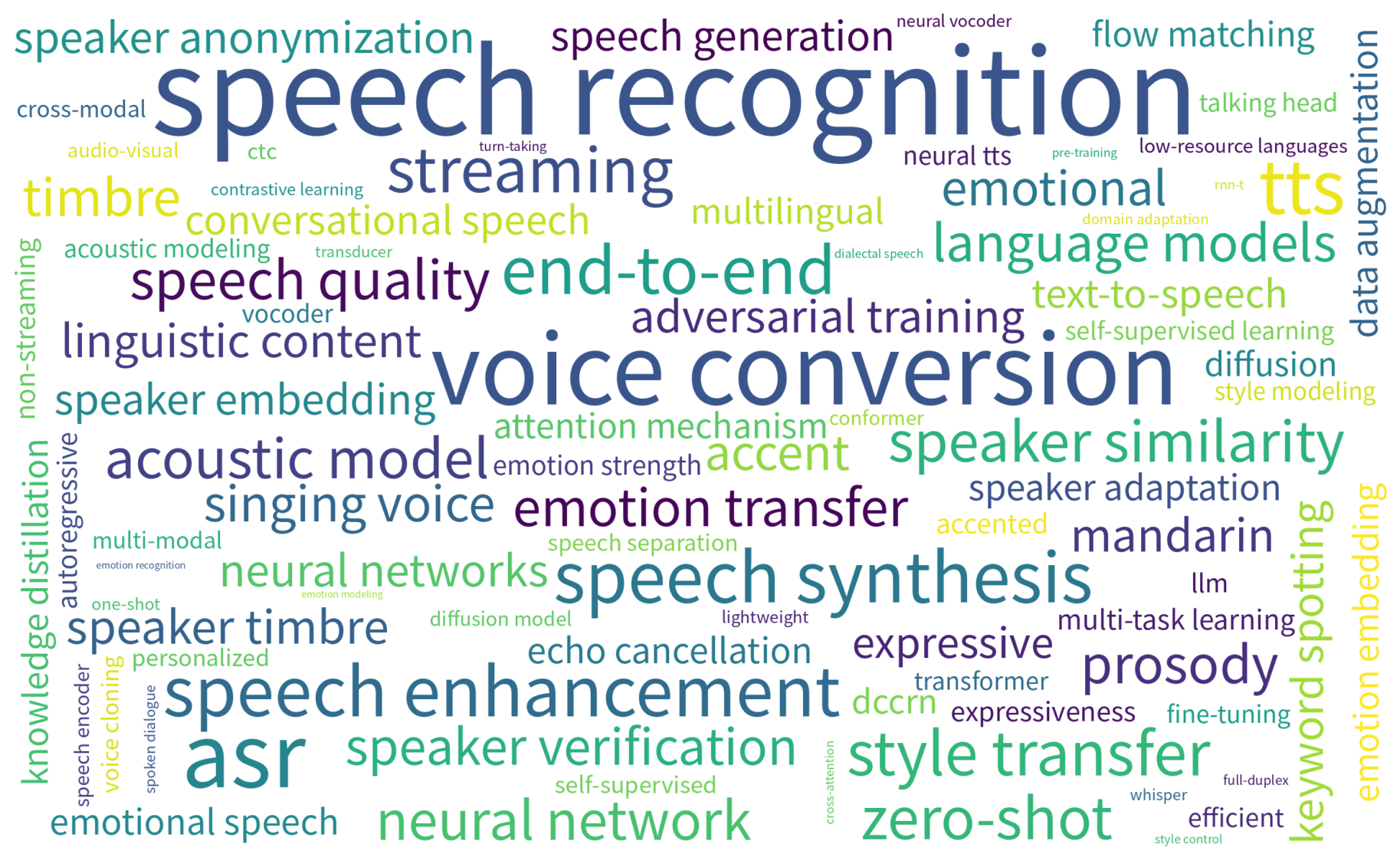

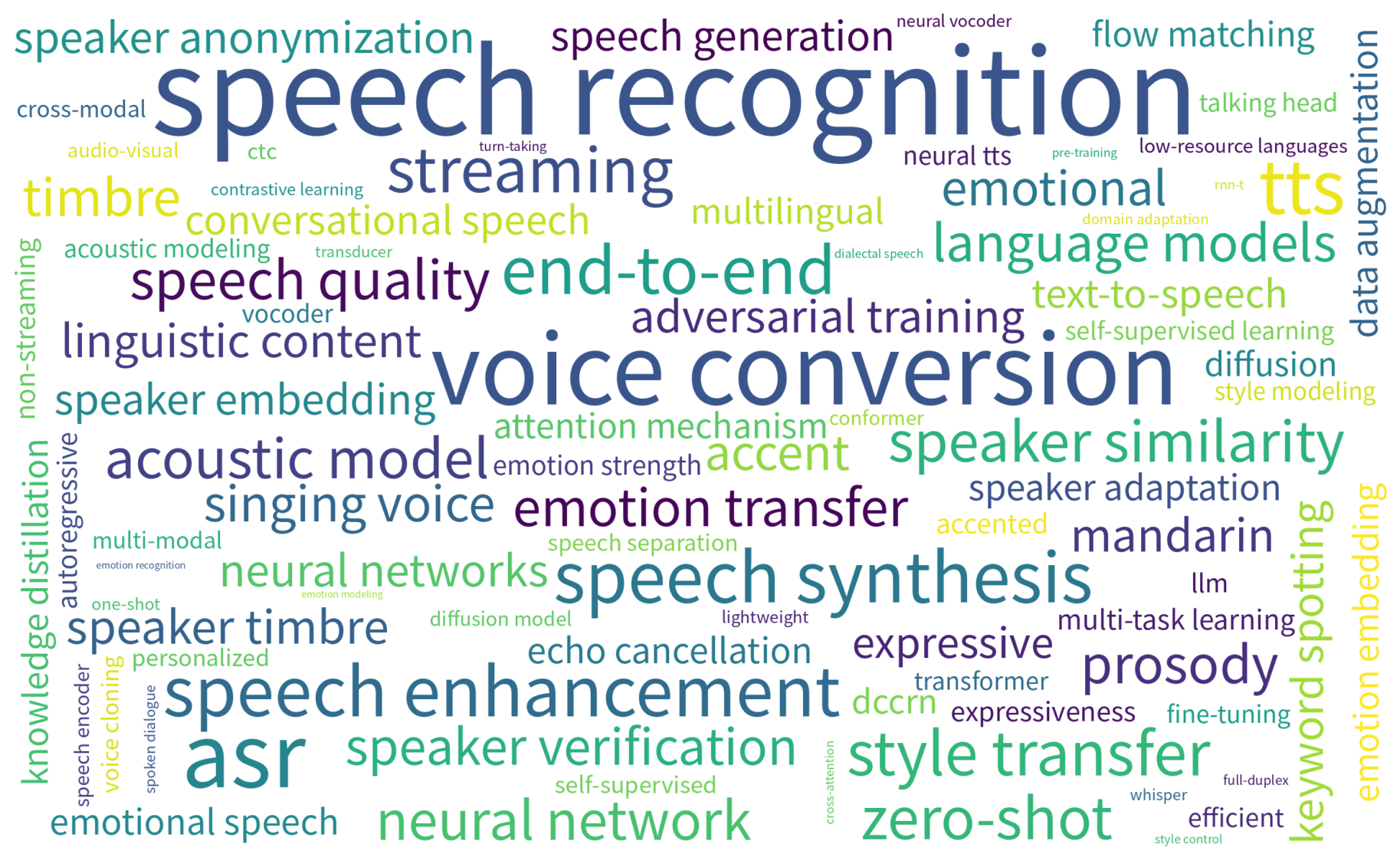

Lei Xie is a Professor at the School of Computer Science, Northwestern Polytechnical University (NPU), where he leads the Audio, Speech and Language Processing Lab (ASLP@NPU). His research focuses on speech processing, conversational AI, advanced neural models for speech and language technologies, and large audio/speech language models, with contributions spanning speech enhancement, automatic speech recognition, speech synthesis, and spoken dialogue systems.

He is also committed to advancing open-source research infrastructure for the community, leading projects such as the widely used WeNet speech recognition toolkit and the WenetSpeech open-data series.

Dr. Xie received his Ph.D. in Computer Engineering from NPU, where his doctoral research focused on speech recognition. Before joining NPU as a faculty member, he held research positions at Vrije Universiteit Brussel, City University of Hong Kong, and The Chinese University of Hong Kong.

He has received several honors and recognitions, including the New Century Excellent Talents Program of the Ministry of Education of China, the Shaanxi Young Science and Technology Star Award, recognition as one of the World’s Top 2% Scientists (Stanford University & Elsevier), and the title of Huawei Cloud AI Distinguished Teacher.

Professor Xie has published over 400 peer-reviewed papers in audio, speech, and language processing, with more than 18,000 citations on Google Scholar and an H-index of 63. His work has received multiple best paper awards at international conferences and won several international challenge championships. A number of his research outcomes have also been successfully translated into real-world industrial applications.

At ASLP@NPU, he mentors a diverse group of students and researchers working at the intersection of speech, audio, and language intelligence. He is also an active contributor to the research community, serving in leadership and editorial roles. He currently serves as Vice Chairperson of the ISCA Special Interest Group on Chinese Spoken Language Processing (SIG-CSLP) and as Senior Area Editor for both IEEE/ACM Transactions on Audio, Speech, and Language Processing and IEEE Signal Processing Letters.

News

| Date | News |
| --- | --- |
| May 19, 2026 | Together with the WeNet open-source community, we have released S2Accompanist, winning the Efficiency track at ICME 2026 ATTM with a lightweight 402M-parameter model! |
| May 19, 2026 | We are excited to announce that the 2nd Challenge on Multilingual Conversational Speech Language Models (MLC-SLM) features a total prize pool of USD 20,000. Join the challenge and win! |
| Apr 10, 2026 | We are pleased to announce the IEEE SLT 2026 SmartGlasses Challenge on Egocentric Speech Interaction for AI Glasses. |
| Apr 10, 2026 | The 2026 master’s cohort graduated successfully and joined top companies such as Alibaba, Tencent, and JD.com. Congratulations! |
| Apr 07, 2026 | WenetSpeech-Wu, the largest Wu Chinese dataset to date, accepted by ACL 2026. |
| Apr 07, 2026 | LLM-forced Aligner, the technology behind Qwen3-Qwen/Qwen3-ForcedAligner, accepted by ACL 2026. |
| Apr 05, 2026 | Congratulations to Dr. Jijun Yao on receiving the Tencent Qingyun Program offer and joining Tencent! |
| Mar 17, 2026 | 4 papers accepted by ICME 2026. |
| Jan 18, 2026 | 8 papers accepted by ICASSP 2026. |
| Jan 08, 2026 | VoiceSculptor, a voice design model, now open-sourced. |

Lab

The Audio, Speech and Language Processing Lab (ASLP@NPU), led by Prof. Lei Xie at Northwestern Polytechnical University, is widely recognized as one of the leading research groups in speech, audio, and language technologies. The lab conducts cutting-edge research spanning speech recognition, speech synthesis, speech enhancement, spoken dialogue systems, and emerging audio language models, with a strong commitment to both scientific innovation and real-world impact.

ASLP@NPU places equal emphasis on research excellence and practical deployment, and has maintained close and long-term collaborations with industry. Many of its research outcomes have been successfully translated into real applications, while its open-source platforms and data resources — including WeNet and WenetSpeech — have been widely adopted by both academia and industry.

The lab has also played an important role in cultivating talent for the broader AI and speech community, with many alumni becoming technical leaders, senior researchers, and key engineering contributors in leading technology companies and research institutions.

By combining academic depth, engineering strength, and industrial relevance, ASLP@NPU continues to advance the frontier of speech intelligence and next-generation human–machine communication.

Recent Popular Open-source Projects

- SoulX-Podcast: Inference codebase for generating high-fidelity podcasts from text with multi-speaker multi-dialect support
- DiffRhythm: End-to-end full-length song generation via latent diffusion
- OSUM: Open speech understanding model for limited academic resources
- SongEval: Aesthetic evaluation toolkit for generated songs
- WenetSpeech-Yue: Large-scale Cantonese speech corpus with multi-dimensional annotation
- MeanVC: Lightweight and streaming zero-shot voice conversion via mean flows
- VoiceSculptor: Instruct text-to-speech solution based on LLaSA and CosyVoice2
- WenetSpeech-Chuan: Large-scale Sichuanese dialect speech corpus
- DiffRhythm2: Efficient high-fidelity song generation via block flow matching
- WenetSpeech-Wu-Repo: Large-scale Wu dialect speech corpus with multi-dimensional annotation
- SongFormer: Ultra-Fast, Ultra-Accurate Music Structure Analysis Tool

Recent Publications

- Summary on The Multilingual Conversational Speech Language Model Challenge: Datasets, Tasks, Baselines, and Methods

Bingshen Mu, Pengcheng Guo, Zhaokai Sun, Shuai Wang, Hexin Liu, Mingchen Shao, and 5 more authors

In ICASSP, 2026

This paper summarizes the Interspeech 2025 Multilingual Conversational Speech Language Model (MLC-SLM) challenge, which aims to advance the exploration of building effective multilingual conversational speech LLMs (SLLMs). We provide a detailed description of the task settings for the MLC-SLM challenge, the released real-world multilingual conversational speech dataset totaling approximately 1,604 hours, and the baseline systems for participants. The MLC-SLM challenge attracted 78 teams from 13 countries, with 489 valid leaderboard results and 14 technical reports for the two tasks. We distill valuable insights on building multilingual conversational SLLMs based on submissions from participants, aiming to contribute to the advancement of the community.

- WenetSpeech-Chuan: A Large-Scale Sichuanese Corpus with Rich Annotation for Dialectal Speech Processing

Yuhang Dai, Ziyu Zhang, Shuai Wang, Longhao Li, Zhao Guo, Tianlun Zuo, and 10 more authors

In ICASSP, 2026

The scarcity of large-scale, open-source data for dialects severely hinders progress in speech technology, a challenge particularly acute for the widely spoken Sichuanese dialects of Chinese. To address this critical gap, we introduce WenetSpeech-Chuan, a 10,000-hour, richly annotated corpus constructed using our novel Chuan-Pipeline, a complete data processing framework for dialectal speech. To facilitate rigorous evaluation and demonstrate the corpus’s effectiveness, we also release high-quality ASR and TTS benchmarks, WenetSpeech-Chuan-Eval, with manually verified transcriptions. Experiments show that models trained on WenetSpeech-Chuan achieve state-of-the-art performance among open-source systems and demonstrate results comparable to commercial services. As the largest open-source corpus for Sichuanese dialects, WenetSpeech-Chuan not only lowers the barrier to research in dialectal speech processing but also plays a crucial role in promoting AI equity and mitigating bias in speech technologies. The corpus, benchmarks, models, and recipes are publicly available on our project page.

- Towards Building Speech Large Language Models for Multitask Understanding in Low-Resource Languages

Mingchen Shao, Bingshen Mu, Chengyou Wang, Hai Li, Ying Yan, Zhonghua Fu, and 1 more author

In ICASSP, 2026

Speech large language models (SLLMs) built on speech encoders, adapters, and LLMs demonstrate remarkable multitask understanding performance in high-resource languages such as English and Chinese. However, their effectiveness substantially degrades in low-resource languages such as Thai. This limitation arises from three factors: (1) existing commonly used speech encoders, like the Whisper family, underperform in low-resource languages and lack support for broader spoken language understanding tasks; (2) the ASR-based alignment paradigm requires training the entire SLLM, leading to high computational cost; (3) paired speech-text data in low-resource languages is scarce. To overcome these challenges in the low-resource language Thai, we introduce XLSR-Thai, the first self-supervised learning (SSL) speech encoder for Thai. It is obtained by continuously training the standard SSL XLSR model on 36,000 hours of Thai speech data. Furthermore, we propose U-Align, a speech-text alignment method that is more resource-efficient and multitask-effective than typical ASR-based alignment. Finally, we present Thai-SUP, a pipeline for generating Thai spoken language understanding data from high-resource languages, yielding the first Thai spoken language understanding dataset of over 1,000 hours. Multiple experiments demonstrate the effectiveness of our methods in building a Thai multitask-understanding SLLM. We open-source XLSR-Thai and Thai-SUP to facilitate future research.

- MeanVC: Lightweight and Streaming Zero-Shot Voice Conversion via Mean Flows

Guobin Ma, Jixun Yao, Ziqian Ning, Yuepeng Jiang, Lingxin Xiong, Lei Xie, and 1 more author

In ICASSP, 2026

Zero-shot voice conversion (VC) aims to transfer timbre from a source speaker to any unseen target speaker while preserving linguistic content. Growing application scenarios demand models with streaming inference capabilities. This has created a pressing need for models that are simultaneously fast, lightweight, and high-fidelity. However, existing streaming methods typically rely on either autoregressive (AR) or non-autoregressive (NAR) frameworks, which either require large parameter sizes to achieve strong performance or struggle to generalize to unseen speakers. In this study, we propose MeanVC, a lightweight and streaming zero-shot VC approach. MeanVC introduces a diffusion transformer with a chunk-wise autoregressive denoising strategy, combining the strengths of both AR and NAR paradigms for efficient streaming processing. By introducing mean flows, MeanVC regresses the average velocity field during training, enabling zero-shot VC with superior speech quality and speaker similarity in a single sampling step by directly mapping from the start to the endpoint of the flow trajectory. Additionally, we incorporate diffusion adversarial post-training to mitigate over-smoothing and further enhance speech quality. Experimental results demonstrate that MeanVC significantly outperforms existing zero-shot streaming VC systems, achieving superior conversion quality with higher efficiency and significantly fewer parameters. Audio demos and code are publicly available at https://aslp-lab.github.io/MeanVC.

- S²Voice: Style-Aware Autoregressive Modeling with Enhanced Conditioning for Singing Style Conversion

Ziqian Wang, Xianjun Xia, Chuanzeng Huang, and Lei Xie

In ICASSP, 2026

We present S²Voice, the winning system of the Singing Voice Conversion Challenge (SVCC) 2025 for both the in-domain and zero-shot singing style conversion tracks. Built on the strong two-stage Vevo baseline, S²Voice advances style control and robustness through several contributions. First, we integrate style embeddings into the autoregressive large language model (AR LLM) via a FiLM-style layer-norm conditioning and a style-aware cross-attention for enhanced fine-grained style modeling. Second, we introduce a global speaker embedding into the flow-matching transformer to improve timbre similarity. Third, we curate a large, high-quality singing corpus via an automated pipeline for web harvesting, vocal separation, and transcript refinement. Finally, we employ a multi-stage training strategy combining supervised fine-tuning (SFT) and direct preference optimization (DPO). Subjective listening tests confirm our system’s superior performance: leading in style similarity and singer similarity for Task 1, and across naturalness, style similarity, and singer similarity for Task 2. Ablation studies demonstrate the effectiveness of our contributions in enhancing style fidelity, timbre preservation, and generalization. Audio samples are available at https://honee-w.github.io/SVC-Challenge-Demo/.

- The ICASSP 2026 Automatic Song Aesthetics Evaluation Challenge

Guobin Ma, Yuxuan Xia, Jixun Yao, Huixin Xue, Hexin Liu, Shuai Wang, and 2 more authors

In ICASSP, 2026

This paper summarizes the ICASSP 2026 Automatic Song Aesthetics Evaluation (ASAE) Challenge, which focuses on predicting the subjective aesthetic scores of AI-generated songs. The challenge consists of two tracks: Track 1 targets the prediction of the overall musicality score, while Track 2 focuses on predicting five fine-grained aesthetic scores. The challenge attracted strong interest from the research community and received numerous submissions from both academia and industry. Top-performing systems significantly surpassed the official baseline, demonstrating substantial progress in aligning objective metrics with human aesthetic preferences. The outcomes establish a standardized benchmark and advance human-aligned evaluation methodologies for modern music generation systems.

- The ICASSP 2026 HumDial Challenge: Benchmarking Human-like Spoken Dialogue Systems in the LLM Era

Zhixian Zhao, Shuiyuan Wang, Guojian Li, Hongfei Xue, Chengyou Wang, Shuai Wang, and 10 more authors

In ICASSP, 2026

Driven by the rapid advancement of Large Language Models (LLMs), particularly Audio-LLMs and Omni-models, spoken dialogue systems have evolved significantly, progressively narrowing the gap between human-machine and human-human interactions. Achieving truly “human-like” communication necessitates a dual capability: emotional intelligence to perceive and resonate with users’ emotional states, and robust interaction mechanisms to navigate the dynamic, natural flow of conversation, such as real-time turn-taking. Therefore, we launched the first Human-like Spoken Dialogue Systems Challenge (HumDial) at ICASSP 2026 to benchmark these dual capabilities. Anchored by a sizable dataset derived from authentic human conversations, this initiative establishes a fair evaluation platform across two tracks: (1) Emotional Intelligence, targeting long-term emotion understanding and empathetic generation; and (2) Full-Duplex Interaction, systematically evaluating real-time decision-making under “listening-while-speaking” conditions. This paper summarizes the dataset, track configurations, and the final results.

- Easy Turn: Integrating Acoustic and Linguistic Modalities for Robust Turn-Taking in Full-Duplex Spoken Dialogue Systems

Guojian Li, Chengyou Wang, Hongfei Xue, Shuiyuan Wang, Dehui Gao, Zihan Zhang, and 5 more authors

In ICASSP, 2026

Full-duplex interaction is crucial for natural human-machine communication, yet remains challenging as it requires robust turn-taking detection to decide when the system should speak, listen, or remain silent. Some existing solutions rely on dedicated turn-taking models, most of which are not open-sourced; the few available ones are limited by their large parameter size or by supporting only a single modality, such as acoustic or linguistic. Other approaches finetune LLM backbones to enable full-duplex capability, but this requires large amounts of full-duplex data, which remain scarce in open-source form. To address these issues, we propose Easy Turn, an open-source, modular turn-taking detection model that integrates acoustic and linguistic bimodal information to predict four dialogue turn states: complete, incomplete, backchannel, and wait. We also release the Easy Turn trainset, a 1,145-hour speech dataset designed for training turn-taking detection models. Compared to existing open-source models like TEN Turn Detection and Smart Turn V2, our model achieves state-of-the-art turn-taking detection accuracy on our open-source Easy Turn testset. The data and model will be made publicly available on GitHub.

- KALL-E: Autoregressive Speech Synthesis with Next-Distribution Prediction

Kangxiang Xia, Xinfa Zhu, Jixun Yao, Wenjie Tian, Wenhao Li, and Lei Xie

In AAAI, 2026

We introduce KALL-E, a novel autoregressive (AR) language model for text-to-speech (TTS) synthesis that operates by predicting the next distribution of continuous speech frames. Unlike existing methods, KALL-E directly models the continuous speech distribution conditioned on text, eliminating the need for any diffusion-based components. Specifically, we utilize a Flow-VAE to extract a continuous latent speech representation from waveforms, instead of relying on discrete speech tokens. A single AR Transformer is then trained to predict these continuous speech distributions from text, optimizing a Kullback-Leibler divergence loss as its objective. Experimental results demonstrate that KALL-E achieves superior speech synthesis quality and can even adapt to a target speaker from just a single sample. Importantly, KALL-E provides a more direct and effective approach for utilizing continuous speech representations in TTS.

- Hearing More with Less: Multi-Modal Retrieval-and-Selection Augmented Conversational LLM-Based ASR

Bingshen Mu, Hexin Liu, Hongfei Xue, Kun Wei, and Lei Xie

In AAAI, 2026

Automatic Speech Recognition (ASR) aims to convert human speech content into corresponding text. In conversational scenarios, effectively utilizing context can enhance its accuracy. Large Language Models’ (LLMs) exceptional long-context understanding and reasoning abilities enable LLM-based ASR (LLM-ASR) to leverage historical context for recognizing conversational speech, which has a high degree of contextual relevance. However, existing conversational LLM-ASR methods use a fixed number of preceding utterances or the entire conversation history as context, resulting in significant ASR confusion and computational costs due to massive irrelevant and redundant information. This paper proposes a multi-modal retrieval-and-selection method named MARS that augments conversational LLM-ASR by enabling it to retrieve and select the most relevant acoustic and textual historical context for the current utterance. Specifically, multi-modal retrieval obtains a set of candidate historical contexts, each exhibiting high acoustic or textual similarity to the current utterance. Multi-modal selection calculates the acoustic and textual similarities for each retrieved candidate historical context and, by employing our proposed near-ideal ranking method to consider both similarities, selects the best historical context. Evaluations on the Interspeech 2025 Multilingual Conversational Speech Language Model Challenge dataset show that the LLM-ASR, when trained on only 1.5K hours of data and equipped with the MARS, outperforms the state-of-the-art top-ranking system trained on 179K hours of data.

- WenetSpeech-Yue: A Large-scale Cantonese Speech Corpus with Multi-dimensional Annotation

Longhao Li, Zhao Guo, Hongjie Chen, Yuhang Dai, Ziyu Zhang, Hongfei Xue, and 12 more authors

In AAAI, 2026

The development of speech understanding and generation has been significantly accelerated by the availability of large-scale, high-quality speech datasets. Among these, ASR and TTS are regarded as the most established and fundamental tasks. However, for Cantonese (Yue Chinese), spoken by approximately 84.9 million native speakers worldwide, limited annotated resources have hindered progress and resulted in suboptimal ASR and TTS performance. To address this challenge, we propose WenetSpeech-Pipe, an integrated pipeline for building large-scale speech corpora with multi-dimensional annotation tailored for speech understanding and generation. It comprises six modules: Audio Collection, Speaker Attributes Annotation, Speech Quality Annotation, Automatic Speech Recognition, Text Postprocessing, and Recognizer Output Voting, enabling rich and high-quality annotations. Based on this pipeline, we release WenetSpeech-Yue, the first large-scale Cantonese speech corpus with multi-dimensional annotation for ASR and TTS, covering 21,800 hours across 10 domains with annotations including ASR transcription, text confidence, speaker identity, age, gender, and speech quality scores, among others. We also release WSYue-eval, a comprehensive Cantonese benchmark with two components: WSYue-ASR-eval, a manually annotated set for evaluating ASR on short and long utterances, code-switching, and diverse acoustic conditions, and WSYue-TTS-eval, with base and coverage subsets for standard and generalization testing. Experimental results show that models trained on WenetSpeech-Yue achieve competitive results against state-of-the-art (SOTA) Cantonese ASR and TTS systems, including commercial and LLM-based models, highlighting the value of our dataset and pipeline.

- Steering Language Model to Stable Speech Emotion Recognition via Contextual Perception and Chain of Thought

Zhixian Zhao, Xinfa Zhu, Xinsheng Wang, Shuiyuan Wang, Xuelong Geng, Wenjie Tian, and 1 more author

IEEE/ACM Transactions on Audio, Speech, and Language Processing, 2025

Large-scale audio language models (ALMs), such as Qwen2-Audio, are capable of comprehending diverse audio signals, performing audio analysis, and generating textual responses. However, in speech emotion recognition (SER), ALMs often suffer from hallucinations, resulting in misclassifications or irrelevant outputs. To address these challenges, we propose C²SER, a novel ALM designed to enhance the stability and accuracy of SER through Contextual perception and Chain of Thought (CoT). C²SER integrates the Whisper encoder for semantic perception and Emotion2Vec-S for acoustic perception, where Emotion2Vec-S extends Emotion2Vec with semi-supervised learning to enhance emotional discrimination. Additionally, C²SER employs a CoT approach, processing SER in a step-by-step manner while leveraging speech content and speaking styles to improve recognition. To further enhance stability, C²SER introduces self-distillation from explicit CoT to implicit CoT, mitigating error accumulation and boosting recognition accuracy. Extensive experiments show that C²SER outperforms existing popular ALMs, such as Qwen2-Audio and SECap, delivering more stable and precise emotion recognition. We release the training code, checkpoints, and test sets to facilitate further research.

- FPO: Fine-grained Preference Optimization Improves Zero-shot Text-to-Speech

Jixun Yao, Yuguang Yang, Yu Pan, Yuan Feng, Ziqian Ning, Jianhao Ye, and 2 more authors

IEEE/ACM Transactions on Audio, Speech, and Language Processing, 2025

Integrating human feedback to align text-to-speech (TTS) system outputs with human preferences has proven to be an effective approach for enhancing the robustness of language model-based TTS systems. Current approaches primarily focus on using preference data annotated at the utterance level. However, frequent issues that affect the listening experience often only arise in specific segments of audio samples, while other segments are well-generated. In this study, we propose a fine-grained preference optimization approach (FPO) to enhance the robustness of TTS systems. FPO focuses on addressing localized issues in generated samples rather than uniformly optimizing the entire utterance. Specifically, we first analyze the types of issues in generated samples, categorize them into two groups, and propose a selective training loss strategy to optimize preferences based on fine-grained labels for each issue type. Experimental results show that FPO enhances the robustness of zero-shot TTS systems by effectively addressing local issues, significantly reducing the bad case ratio, and improving intelligibility. Furthermore, FPO exhibits superior data efficiency compared with baseline systems, achieving similar performance with fewer training samples.

- EchoFree: Towards Ultra Lightweight and Efficient Neural Acoustic Echo Cancellation

Xingchen Li, Boyi Kang, Ziqian Wang, Zihan Zhang, Mingshuai Liu, Zhonghua Fu, and 1 more author

In ASRU, 2025

In recent years, neural networks (NNs) have been widely applied in acoustic echo cancellation (AEC). However, existing approaches struggle to meet real-world low-latency and computational requirements while maintaining performance. To address this challenge, we propose EchoFree, an ultra lightweight neural AEC framework that combines linear filtering with a neural post-filter. Specifically, we design a neural post-filter operating on Bark-scale spectral features. Furthermore, we introduce a two-stage optimization strategy utilizing self-supervised learning (SSL) models to improve model performance. We evaluate our method on the blind test set of the ICASSP 2023 AEC Challenge. The results demonstrate that our model, with only 278K parameters and a computational complexity of 30 MMACs, outperforms existing low-complexity AEC models and achieves performance comparable to that of the state-of-the-art lightweight model DeepVQE-S. The audio examples are available.

- XEmoRAG: Cross-Lingual Emotion Transfer with Controllable Intensity Using Retrieval-Augmented Generation

Tianlun Zuo, Jingbin Hu, Yuke Li, Xinfa Zhu, Hai Li, Ying Yan, and 3 more authors

In ASRU, 2025

Zero-shot emotion transfer in cross-lingual speech synthesis refers to generating speech in a target language, where the emotion is expressed based on reference speech from a different source language. However, this task remains challenging due to the scarcity of parallel multilingual emotional corpora, the presence of foreign accent artifacts, and the difficulty of separating emotion from language-specific prosodic features. In this paper, we propose XEmoRAG, a novel framework to enable zero-shot emotion transfer from Chinese to Thai using a large language model (LLM)-based model, without relying on parallel emotional data. XEmoRAG extracts language-agnostic emotional embeddings from Chinese speech and retrieves emotionally matched Thai utterances from a curated emotional database, enabling controllable emotion transfer without explicit emotion labels. Additionally, a flow-matching alignment module minimizes pitch and duration mismatches, ensuring natural prosody. It also blends Chinese timbre into the Thai synthesis, enhancing rhythmic accuracy and emotional expression, while preserving speaker characteristics and emotional consistency. Experimental results show that XEmoRAG synthesizes expressive and natural Thai speech using only Chinese reference audio, without requiring explicit emotion labels. These results highlight XEmoRAG’s capability to achieve flexible and low-resource emotional transfer across languages. Our demo is available at https://tlzuo-lesley.github.io/Demo-page/.

- Llasa+: Free Lunch for Accelerated and Streaming Llama-Based Speech Synthesis

Wenjie Tian, Xinfa Zhu, Hanke Xie, Zhen Ye, Wei Xue, and Lei Xie

In ASRU, 2025

Recent progress in text-to-speech (TTS) has achieved impressive naturalness and flexibility, especially with the development of large language model (LLM)-based approaches. However, existing autoregressive (AR) structures and large-scale models, such as Llasa, still face significant challenges in inference latency and streaming synthesis. To address these limitations, we introduce Llasa+, an accelerated and streaming TTS model built on Llasa. Specifically, to accelerate the generation process, we introduce two plug-and-play Multi-Token Prediction (MTP) modules following the frozen backbone. These modules allow the model to predict multiple tokens in one AR step. Additionally, to mitigate potential error propagation caused by inaccurate MTP, we design a novel verification algorithm that leverages the frozen backbone to validate the generated tokens, thus allowing Llasa+ to achieve speedup without sacrificing generation quality. Furthermore, we design a causal decoder that enables streaming speech reconstruction from tokens. Extensive experiments show that Llasa+ achieves a 1.48× speedup without sacrificing generation quality, despite being trained only on LibriTTS. Moreover, the MTP-and-verification framework can be applied to accelerate any LLM-based model. All code and models are publicly available at https://github.com/ASLP-lab/LLaSA_Plus.

- Efficient Scaling for LLM-based ASR

Bingshen Mu, Yiwen Shao, Kun Wei, Dong Yu, and Lei Xie

In ASRU, 2025

Large language model (LLM)-based automatic speech recognition (ASR) achieves strong performance but often incurs high computational costs. This work investigates how to obtain the best LLM-ASR performance efficiently. Through comprehensive and controlled experiments, we find that pretraining the speech encoder before integrating it with the LLM leads to significantly better scaling efficiency than the standard practice of joint post-training of LLM-ASR. Based on this insight, we propose a new multi-stage LLM-ASR training strategy, EFIN: Encoder First Integration. Among all training strategies evaluated, EFIN consistently delivers better performance (21.1% relative character error rate reduction, CERR) with significantly lower computation budgets (49.9% FLOPs). Furthermore, we derive a scaling law that approximates ASR error rates as a function of computation, providing practical guidance for LLM-ASR scaling.

- DiffRhythm+: Controllable and Flexible Full-Length Song Generation with Preference Optimization

Huakang Chen, Yuepeng Jiang, Guobin Ma, Chunbo Hao, Shuai Wang, Jixun Yao, and 4 more authors

In ASRU, 2025

Songs, as a central form of musical art, exemplify the richness of human intelligence and creativity. While recent advances in generative modeling have enabled notable progress in long-form song generation, current systems for full-length song synthesis still face major challenges, including data imbalance, insufficient controllability, and inconsistent musical quality. DiffRhythm, a pioneering diffusion-based model, advanced the field by generating full-length songs with expressive vocals and accompaniment. However, its performance was constrained by an unbalanced model training dataset and limited controllability over musical style, resulting in noticeable quality disparities and restricted creative flexibility. To address these limitations, we propose DiffRhythm+, an enhanced diffusion-based framework for controllable and flexible full-length song generation. DiffRhythm+ leverages a substantially expanded and balanced training dataset to mitigate issues such as repetition and omission of lyrics, while also fostering the emergence of richer musical skills and expressiveness. The framework introduces a multi-modal style conditioning strategy, enabling users to precisely specify musical styles through both descriptive text and reference audio, thereby significantly enhancing creative control and diversity. We further introduce direct performance optimization aligned with user preferences, guiding the model toward consistently preferred outputs across evaluation metrics. Extensive experiments demonstrate that DiffRhythm+ achieves significant improvements in naturalness, arrangement complexity, and listener satisfaction over previous systems.

-

REF-VC: Robust, Expressive and Fast Zero-Shot Voice Conversion with Diffusion Transformers

Yuepeng Jiang, Ziqian Ning, Shuai Wang, Chengjia Wang, Mengxiao Bi, Pengcheng Zhu, and 2 more authors

In ASRU, 2025

In real-world voice conversion applications, environmental noise in source speech and user demands for expressive output pose critical challenges. Traditional ASR-based methods ensure noise robustness but suppress prosody richness, while SSL-based models improve expressiveness but suffer from timbre leakage and noise sensitivity. This paper proposes REF-VC, a noise-robust expressive voice conversion system. Key innovations include: (1) A random erasing strategy to mitigate the information redundancy inherent in SSL features, enhancing noise robustness and expressiveness; (2) Implicit alignment inspired by E2TTS to suppress non-essential feature reconstruction; (3) Integration of Shortcut Models to accelerate flow matching inference, reducing the number of inference steps to 4. Experimental results demonstrate that REF-VC outperforms baselines such as Seed-VC in zero-shot scenarios on the noisy set, while performing comparably to Seed-VC on the clean set. In addition, REF-VC is compatible with singing voice conversion within a single model.

-

Enhancing Non-Core Language Instruction-Following in Speech LLMs via Semi-Implicit Cross-Lingual CoT Reasoning

Hongfei Xue, Yufeng Tang, Hexin Liu, Jun Zhang, Xuelong Geng, and Lei Xie

In ACM MM, 2025

Large language models have been extended to the speech domain, leading to the development of speech large language models (SLLMs). While existing SLLMs demonstrate strong performance in speech instruction-following for core languages (e.g., English), they often struggle with non-core languages due to the scarcity of paired speech-text data and limited multilingual semantic reasoning capabilities. To address this, we propose the semi-implicit Cross-lingual Speech Chain-of-Thought (XS-CoT) framework, which integrates speech-to-text translation into the reasoning process of SLLMs. The XS-CoT generates four types of tokens: instruction and response tokens in both core and non-core languages, enabling cross-lingual transfer of reasoning capabilities. To mitigate inference latency in generating target non-core response tokens, we incorporate a semi-implicit CoT scheme into XS-CoT, which progressively compresses the first three types of intermediate reasoning tokens while retaining global reasoning logic during training. By leveraging the robust reasoning capabilities of the core language, XS-CoT improves responses for non-core languages by up to 45% in GPT-4 score when compared to direct supervised fine-tuning on two representative SLLMs, Qwen2-Audio and SALMONN. Moreover, the semi-implicit XS-CoT reduces token delay by more than 50% with a slight drop in GPT-4 scores. Importantly, XS-CoT requires only a small amount of high-quality training data for non-core languages by leveraging the reasoning capabilities of core languages. To support training, we also develop a data pipeline and open-source speech instruction-following datasets in Japanese, German, and French.

Professional Services

Awards

- 3rd Place, Single Track, Interspeech 2026 Audio Reasoning Challenge

- 1st Place, In-Domain Singing Style Conversion Track, ASRU 2025 The Singing Voice Conversion Challenge

- 1st Place, Zero-Shot Singing Style Conversion Track, ASRU 2025 The Singing Voice Conversion Challenge

- 1st Place, General Audio Source Separation Track, NCMMSC 2025 CCF Advanced Audio Technology Competition

- 2nd Place, Target Speaker Lipreading Track, ICME 2024 Chat-scenario Chinese Lipreading (ChatCLR) Challenge

- 1st Place, Source Speaker Verification Against Voice Conversion Track, SLT 2024 Source Speaker Tracing Challenge (SSTC)

- 1st Place, ICASSP 2024 Packet Loss Concealment (PLC) Challenge

- 2nd Place, Real-time Track, ICASSP 2024 Speech Signal Improvement Challenge

- 3rd Place, Non-real-time Track, ICASSP 2024 Speech Signal Improvement Challenge

- 2nd Place, ICASSP 2024 Multimodal Information based Speech Processing (MISP) Challenge

- 1st Place, 2024 Shenghua Cup Acoustic Technology Competition

- 1st Place, Single-Speaker VSR Track, NCMMSC 2024 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Multi-Speaker VSR Track, NCMMSC 2024 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, SLT 2024 Low-Resource Dysarthria Wake-Up Word Spotting Challenge (LRDWWS Challenge)

- 1st Place, Speech-to-Speech Translation (Offline) Track, ACL 2023 Speech-to-Speech Translation (S2ST)

- 1st Place, Any-to-one, In-domain Singing Voice Conversion Track, ASRU 2023 The Singing Voice Conversion Challenge

- 2nd Place, Any-to-one, Cross-domain Singing Voice Conversion Track, ASRU 2023 The Singing Voice Conversion Challenge

- 2nd Place, Audio-Visual Target Speaker Extraction (AVTSE) Track, ICASSP 2023 Multi-modal Information based Speech Processing (MISP) Challenge

- 1st Place, UDASE (Unsupervised Domain Adaptation for Speech Enhancement) Track, Interspeech 2023 CHiME Speech Separation and Recognition Challenge (CHiME-7)

- 1st Place, Non-personalized AEC Track, ICASSP 2023 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 2nd Place, Personalized AEC Track, ICASSP 2023 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 2nd Place, Audio-Visual Diarization & Recognition Track, ICASSP 2023 Multimodal Information based Speech Processing (MISP) Challenge

- 3rd Place, Audio-Visual Speaker Diarization Track, ICASSP 2023 Multimodal Information based Speech Processing (MISP) Challenge

- 1st Place, Headset Speech Enhancement Track, ICASSP 2023 Deep Noise Suppression Challenge

- 1st Place, Speakerphone Speech Enhancement Track, ICASSP 2023 Deep Noise Suppression Challenge

- 1st Place, Speech Enhancement Track, 2023 Shenghua Cup Acoustic Technology Competition

- 1st Place, ASRU 2023 MultiLingual Speech processing Universal PERformance Benchmark (SUPERB)

- 1st Place, Single-Speaker VSR Track, NCMMSC 2023 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Multi-Speaker VSR Track, NCMMSC 2023 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Speaker Anonymization Track, Interspeech 2022 VoicePrivacy 2022 Challenge (VPC 2022)

- 2nd Place, Fully-supervised Track, Interspeech 2022 Far-field Speaker Verification Challenge (FFSVC)

- 2nd Place, Semi-supervised Track, Interspeech 2022 Far-field Speaker Verification Challenge (FFSVC)

- 2nd Place, ISCSLP 2022 Magichub Code-Switching ASR Challenge

- 3rd Place, ISCSLP 2022 Conversational Short-phrase Speaker Diarization Challenge

- 1st Place, Constrained Track, O-COCOSDA 2022 Indic Multilingual Speaker Verification Challenge (I-MSV)

- 3rd Place, Unconstrained Track, O-COCOSDA 2022 Indic Multilingual Speaker Verification Challenge (I-MSV)

- 3rd Place, NCMMSC 2022 Low-resource Mongolian Text-to-Speech Challenge

- 2nd Place, Training with VoxCeleb 1/2 Only Track, VoxSRC 2021 Workshop 2021 VoxCeleb Speaker Recognition Challenge (VoxSRC)

- 2nd Place, Additional Public Data Allowed (e.g., MUSAN, RIR) Track, VoxSRC 2021 Workshop 2021 VoxCeleb Speaker Recognition Challenge (VoxSRC)

- 3rd Place, Real-Time Wideband Speech Enhancement Track, Interspeech 2021 Deep Noise Suppression Challenge (DNS Challenge)

- 3rd Place, Real-Time AEC & Speech Enhancement Track, Interspeech 2021 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 1st Place, Close-talking Single-channel Track, ISCSLP 2021 Personalized Voice Trigger Challenge (PVTC)

- 1st Place, Real-Time Wideband Speech Enhancement Track, Interspeech 2020 Deep Noise Suppression Challenge (DNS Challenge)

- 2nd Place, Non-Real-Time Wideband Speech Enhancement Track, Interspeech 2020 Deep Noise Suppression Challenge (DNS Challenge)

- 1st Place, Closed-set Word-level Audio-Visual Speech Recognition Track, ICMI 2019 Mandarin Audio-Visual Speech Recognition Challenge

- 3rd Place, Interspeech 2018 CHiME Speech Separation and Recognition Challenge (CHiME-5)

- 2nd Place, Unsupervised Subword Unit Modeling Track, Interspeech 2017 Zero Resource Speech Challenge

- 1st Place, Spoken Term Discovery Track, Interspeech 2015 Zero Resource Speech Challenge

- 1st Place, QUESST (Query-by-Example Speech Search) Track, MediaEval Multimedia Benchmark Workshop 2015 Query-by-Example Search on Speech Task (QUESST)

- 2nd Place, QUESST (Query-by-Example Speech Search) Track, MediaEval Multimedia Benchmark Workshop 2014 Query-by-Example Search on Speech Task (QUESST)